This post originally appeared on HERE360.

What do you get when you combine automobiles, location technology and artificial intelligence? Improved design, consumer interaction and unique driving experiences.

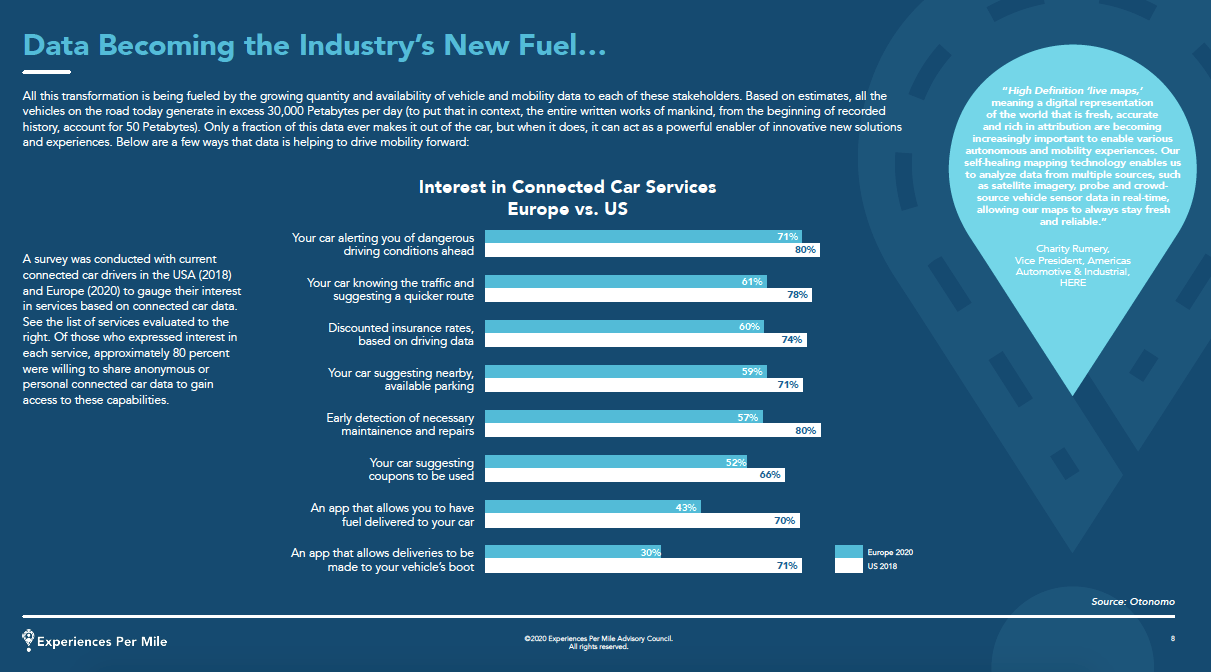

Data and artificial intelligence (AI) are transforming the automotive sector and, with it, what we expect from driving. According to the Experiences Per Mile Advisory Council (EPM), consumers are anticipating increasingly personalized and connected experiences.

Car manufacturers looking to distinguish themselves recognize not only the value of using AI to gather and analyze data, but also the advantage of combining AI with location technology. Using location-enabled AI can help automotive brands create unique content for their current and future clientele. This includes future AI-powered in-car assistants that can understand and learn drivers' preferences to do everything from suggesting parking options and favorite amenities like gas stations to providing efficient (re)routing and supporting users with discounted insurance rates.

Cerence, a HERE partner, is combining HERE Location Services with AI to find new ways of connecting with its specific customer base.

The company is a spin-off of Nuance, a leader in voice recognition for twenty years. It was the first company to provide speech recognition in cars, in the 1990s, and, as Cerence, it is now the global industry leader in creating unique, moving experiences for the automotive world. It uses voice-enabled AI and location technology to help improve how drivers use their vehicles, including safety features, by enhancing contextual awareness and accounting for individual preferences.

HERE360 spoke with Christophe Couvreur, Cerence's Vice President of Product, to get some insight into exactly how AI functions and improves the driving experience.

“We work collaboratively with the big tech companies. We are not competing with them; we are complementing them... You may say, 'Hey Mercedes, drive me back home,' or 'Hey Siri, call my mom.' We have the technology to support both... it's about letting the user decide whether to use the car AI or the phone AI.” - Christophe Couvreur, Cerence

Using an AI in-car assistant can be as simple as a dialogue between you and the car, or it can involve combining the speech input feature with different AI-powered technologies that unlock an abundance of functions and capabilities. Upcoming innovations include the ability for AI to recognize users' physical gestures. For example, the user can simply point at an object or location outside of a moving vehicle to find its name, business hours, etc.

Couvreur: “AI is a very broad term... in terms of an AI assistant, or Voice Personal Assistant (VPA), AI plays a variety of roles. The one that most people are familiar with is speech input and output. The ability to recognize what people say to the AI system... using machine learning technology... and then converting the speech to text, understanding the text and turning that into an intent... This is the first step in an AI system. Then the system has to decide what to do with that intent or query from the user... In its simplest form it's a dialogue:

'Call Jasmine.'

'Okay, do you want me to call her cell?'

'Yes.'

'I will start the call.'

...Then there is an interaction back with the user, often using text to speech, making the system speak to the user with a computer voice. These are the classic bits and pieces.”

Essentially, the AI assistant has to be able to converse, as any human would, in order to engage and help the user.
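The flow Couvreur describes (recognized text, then an intent, then a dialogue turn) can be illustrated with a small toy. This is only a sketch under loud assumptions: the names `Intent`, `parse_intent` and `CallDialogue` are hypothetical, the pattern matching stands in for the machine learning models a real assistant would use, and the speech-to-text and text-to-speech steps are left out entirely. It is not how Cerence implements its assistant.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Intent:
    """What the user wants, plus any details (slots) extracted from the text."""
    name: str
    slots: dict = field(default_factory=dict)


def parse_intent(text: str) -> Intent:
    """Turn already-recognized text into an intent.

    Assumption: a toy regular expression replaces the ML-based
    language understanding a production system would use.
    """
    cleaned = text.strip()
    match = re.fullmatch(r"call (\w+)\.?", cleaned, flags=re.IGNORECASE)
    if match:
        return Intent("call_contact", {"contact": match.group(1)})
    if cleaned.lower().rstrip(".") in {"yes", "yeah", "ok", "okay"}:
        return Intent("confirm")
    return Intent("unknown")


class CallDialogue:
    """Keeps just enough state to confirm a call before starting it."""

    def __init__(self) -> None:
        self.pending_contact = None

    def respond(self, text: str) -> str:
        intent = parse_intent(text)
        if intent.name == "call_contact":
            # Remember who the user asked for and ask a follow-up question.
            self.pending_contact = intent.slots["contact"]
            return f"Okay, do you want me to call {self.pending_contact}'s cell?"
        if intent.name == "confirm" and self.pending_contact:
            self.pending_contact = None
            return "I will start the call."
        return "Sorry, I did not understand that."


dialogue = CallDialogue()
print(dialogue.respond("Call Jasmine."))
print(dialogue.respond("Yes."))
```

In a real system each reply string would be handed to a text-to-speech engine rather than printed.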

How does the AI assistant learn? What kinds of things does it learn about and what kind of data does it need?

“You can view learning at two levels. One is learning about you, the driver, and the other is to learn about the state of the world, inside and outside of the car. For instance, you want to refill your [gas tank] and you like to stop at Shell stations because you have a [Shell Station] fuel card... You've been using the [AI] system and every time it finds gas stations you pick Shell, the system has learned your preference and will prioritize that response. That's an example of learning about your preference.

But it can also learn about the world, including your car. So if you ask about the nearest location of gas stations and the next Shell is 200 km away but, due to the low gas status of your car, you only have 50 km of range left... the AI will offer an alternative that is within range.”
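The two levels of learning in this answer can be sketched in a few lines. The example below is a simplified illustration, not Cerence's method: `Station`, `rank_stations`, the brand name "BrandX" and the distances are all hypothetical. It assumes the driver's preference is approximated by counting which brands were picked before, and that the car reports its remaining range in kilometers.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Station:
    brand: str
    distance_km: float


def rank_stations(stations, past_choices, range_km):
    """Suggest reachable stations, preferred brands first.

    stations     -- candidate Station objects
    past_choices -- brands the driver picked before (learning about the driver)
    range_km     -- remaining range reported by the car (learning about the world)
    """
    brand_counts = Counter(past_choices)
    # Drop anything the car cannot reach on its remaining fuel.
    reachable = [s for s in stations if s.distance_km <= range_km]
    # Most frequently chosen brand first; ties broken by shorter distance.
    return sorted(reachable, key=lambda s: (-brand_counts[s.brand], s.distance_km))


history = ["Shell", "Shell", "Shell"]
candidates = [Station("Shell", 200), Station("BrandX", 30)]

# The only Shell station is out of range, so the alternative is offered.
for station in rank_stations(candidates, history, range_km=50):
    print(station.brand, station.distance_km)
```

If a Shell station were within the 50 km range, it would be listed ahead of the closer alternative because of the driver's history.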

What are two examples of AI assistants in relation to navigation and Point-of-Interest (POI) databases? How does this improve the driver/user experience?

“The classic example of this... is called Destination Entry. When you want to go somewhere you have to identify your destination. You can do that by typing in your address or typing in a name and searching for it in the POI database. You can also do that by voice, saying 'Navigate to 1 Westside Road Bellington,' or by saying 'Find the nearest McDonald's.' The AI would then put the address in the system and find the geo-coordinates as a navigation target. If you are [looking for the McDonald's] then the AI would search the POI [database] for something called McDonald's. It will find a few locations and propose them to the driver.”
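The two Destination Entry paths from this answer, an address that is turned into coordinates and a name that is looked up in a POI database, can be sketched as follows. Everything here is an assumption made for illustration: the in-memory `ADDRESSES` and `POIS` tables, their made-up coordinates, and the function names stand in for a real geocoder and POI service, and the utterance is assumed to have been converted to text already.

```python
import math
import re

# Hypothetical data with made-up coordinates, standing in for a geocoder
# and a POI database.
ADDRESSES = {"1 westside road bellington": (52.0, 13.0)}
POIS = [
    ("McDonald's", (52.2, 13.3)),
    ("McDonald's", (52.01, 13.0)),
    ("Shell", (52.005, 13.0)),
]


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def resolve_destination(utterance, position):
    """Return candidate (name, coordinates) targets for a spoken request."""
    text = utterance.strip().rstrip(".")
    poi_query = re.fullmatch(r"find the nearest (.+)", text, flags=re.IGNORECASE)
    if poi_query:
        # POI path: search by name, then propose the closest matches first.
        name = poi_query.group(1).lower()
        hits = [poi for poi in POIS if poi[0].lower() == name]
        return sorted(hits, key=lambda poi: haversine_km(position, poi[1]))
    address_query = re.fullmatch(r"navigate to (.+)", text, flags=re.IGNORECASE)
    if address_query:
        # Address path: look up geo-coordinates to use as the navigation target.
        address = address_query.group(1)
        coords = ADDRESSES.get(address.lower())
        return [(address, coords)] if coords else []
    return []


car_position = (52.0, 13.0)
print(resolve_destination("Navigate to 1 Westside Road Bellington", car_position))
print(resolve_destination("Find the nearest McDonald's.", car_position))
```

The POI path returns several candidates on purpose, mirroring the step where the assistant proposes a few locations and lets the driver choose.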

Of note is the ability for AI assistants to receive and respond in multiple languages, making international navigation seamless.

“We integrate deeply with the car and car-related data. If you bring your iPhone with Siri into your car, Siri does not know much about your car. We [Cerence] provide a deeper level of integration,” states Cerence's Vice President of Product, Christophe Couvreur.

Centered on voice - and going beyond it

Are there any less apparent benefits for drivers interested in using AI assistance?

“The most obvious one is safety. Being able to speak instead of typing addresses [into a head-unit keyboard] is safer [while in-car]. What people will sometimes not realize is that in fact it's often easier for certain types of queries: voice is a more natural interface and even if there is no safety risk, you may still prefer to use voice.”

Location-enabled AI assistants can also be useful when POI databases include medical center and hospital data, making it easier to locate healthcare.

AIs are getting smarter by combining human voices with information about the world around them. This makes data less detached, more contextual and specific to the individual behind the wheel. Pointing at a roadside attraction, for example, requires the AI system to recognize your specific hand movement, combine it with voice recognition and associate that information with geodata and previously recorded personal preferences.

AI offers users the ability to engage with technology as they would with any other real-life, human assistant who gradually gains knowledge about you and makes relevant suggestions ahead of your in-car requests.

“Cerence is not just about voice-command... we are focused on providing a great user experience in the car that is as seamless and natural as possible. You don't need to learn special commands, you can behave with the car as you behave with a human assistant. The AI assistant should be smart enough to learn about you, about the car, about the world... to provide you with the right information,” says Cerence's Vice President of Product, Christophe Couvreur.

Find out how well your car knows you with HERE Connected Driving.