Hannover Messe 2026: Agentic Physical AI Finds Its Interface

By Kaivan Karimi, Business Development Senior Director

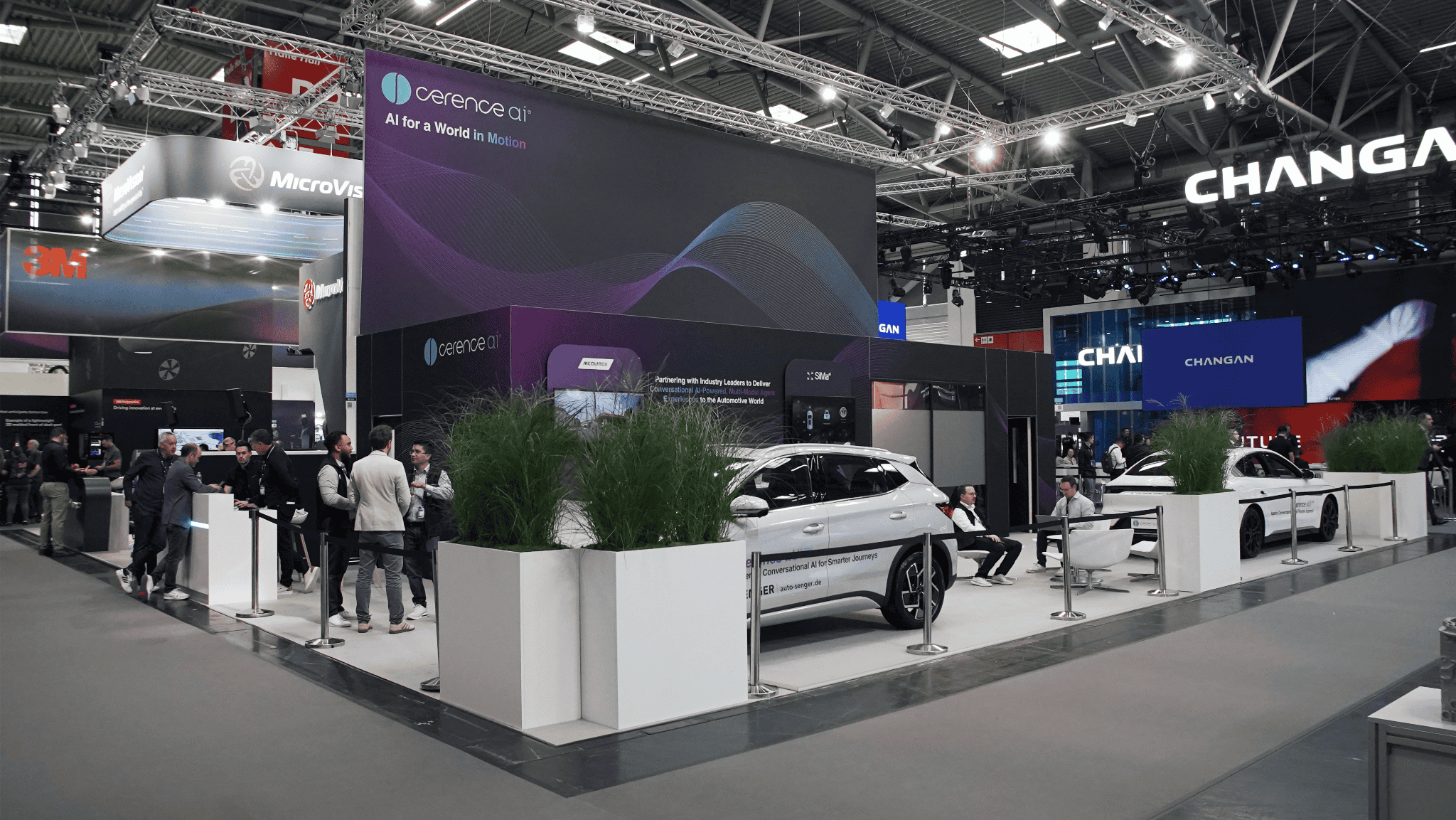

Hannover Messe is widely regarded as the world’s leading trade fair for industrial technology, bringing together manufacturers, automation leaders, energy providers, and software platforms to showcase the latest innovations driving industrial transformation. With over 3,000 exhibiting companies, the 2026 edition made one thing unmistakably clear: AI is no longer confined to dashboards and copilots – it is being embedded directly into machines, factories, and physical workflows.

Alongside our partners Microsoft and NVIDIA, Cerence is helping shape how AI moves from innovation to production. Across mobility, manufacturing, and other infrastructure‑heavy environments, we work closely with our partners and customers to bring conversational and agent-based AI into real-world systems where reliability, security, and scale are non-negotiable. Events like Hannover Messe offer a valuable view of how AI is being deployed in manufacturing, logistics, and other environments where failure is not an option.

Agentic AI Has Left the Lab

Throughout Hannover Messe, agentic physical AI moved from concept to execution. Multi-agent systems that plan, decide, and act took center stage in robotics, material flow, and factory orchestration.

The event marked a shift from assistive AI to execution-capable agents, with a focus on operational readiness. Exhibitors pointed to deployments already operating within real-world constraints such as ambient noise, safety interlocks, regulatory oversight, and latency-sensitive control loops. Our partner NVIDIA’s framing fit what we saw at the show: AI is becoming the operational layer of manufacturing, not an add-on analytics function.

In operational AI, the hardest problem is not reasoning but making actions fast, safe, and explainable in the moment, amid all the noise of the real world. That’s why Cerence AI focuses on resilient, industrial-grade voice AI as the conversational gateway between humans and autonomous systems, including physical AI, in settings where identity, compliance, and auditability matter.

Digital Twins Are Becoming Decision Engines

Digital twins are no longer static models or visualization tools. At Hannover Messe 2026, they increasingly acted as decision engines – feeding real-time context into agentic systems that can reroute production, adjust quality thresholds, or initiate maintenance autonomously.

This shift matters because it collapses the gap between monitoring and action. Simulation, sensing, and execution now operate as a single loop – often spread across edge and cloud infrastructure from partners like Microsoft Azure and NVIDIA.

Human-in-the-Loop Is Not Optional

Despite the excitement around autonomy, one truth kept resurfacing: fully removing humans is neither realistic nor desirable in most industrial settings. Instead, real progress is happening in architectures where voice serves as the “human-in-the-loop control plane” for agentic physical systems. In regulated environments that are noisy and hands-busy, voice is the most natural interface for approval, override, exception handling, and escalation in human‑agent workflows, especially once AI shifts from making suggestions to taking actions.

At the show, traceability, governance, and operational readiness were recurring motifs. Knowing who issued a command or who approved a change matters even more when AI executes on its own. In regulated environments, voice interaction patterns can be designed to support structured confirmations (for example, reading back a proposed action and requiring an explicit spoken approval) and audit-friendly logs that record who approved what, and when.
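To make the idea of a structured confirmation concrete, here is a minimal Python sketch. It assumes speech recognition has already turned the operator's replies into text, and every name in it (`ProposedAction`, `confirm_action`, the keyword sets) is hypothetical rather than part of any Cerence or partner API. The pattern it illustrates: read the proposed action back, accept only an explicit approve or reject, escalate instead of guessing when the answer stays unclear, and emit an attributable audit record either way.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone

# Hypothetical keyword sets; a real system would rely on its NLU layer instead.
AFFIRM = {"confirm", "yes", "approve", "go ahead"}
REJECT = {"cancel", "no", "abort", "stop"}


@dataclass
class ProposedAction:
    agent_id: str
    description: str  # e.g. "reroute line 3 to buffer B"


@dataclass
class AuditRecord:
    timestamp: str
    operator_id: str
    agent_id: str
    prompt: str    # the read-back the operator actually heard
    decision: str  # "approved", "rejected", or "escalated"
    transcript: list[str] = field(default_factory=list)


def classify(utterance: str) -> str:
    """Map one transcribed reply to approved / rejected / unclear."""
    text = utterance.strip().lower()
    if text in AFFIRM:
        return "approved"
    if text in REJECT:
        return "rejected"
    return "unclear"


def confirm_action(action: ProposedAction, operator_id: str,
                   replies: list[str], max_attempts: int = 2) -> AuditRecord:
    """Read the action back and require an explicit spoken decision.

    `replies` stands in for ASR transcripts of successive operator answers.
    Unclear answers are re-prompted up to `max_attempts` times; after that,
    or if no reply arrives at all, the request is escalated, never guessed.
    """
    prompt = f"Agent {action.agent_id} proposes: {action.description}. Say confirm or cancel."
    heard: list[str] = []
    decision = "escalated"
    for reply in replies[:max_attempts]:
        heard.append(reply)
        result = classify(reply)
        if result != "unclear":
            decision = result
            break
    return AuditRecord(
        timestamp=datetime.now(timezone.utc).isoformat(),
        operator_id=operator_id,
        agent_id=action.agent_id,
        prompt=prompt,
        decision=decision,
        transcript=heard,
    )


if __name__ == "__main__":
    record = confirm_action(
        ProposedAction("amr-07", "reroute line 3 to buffer B"),
        operator_id="op-1142",
        replies=["uh, say again?", "confirm"],
    )
    print(json.dumps(asdict(record), indent=2))
```

In this example the ambiguous first reply is re-prompted rather than treated as approval, and the resulting record names the operator, the agent, the exact read-back, and everything that was heard.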

In manufacturing, energy, and logistics, trust is earned through interactions that are observable, attributable, and compliant with both safety and security requirements. Operators need to know what an agent is doing, why it is doing it, and how to intervene when real‑world conditions change.

Safety and security go hand in hand, which is why we partner with Microsoft to deliver best-in-class, enterprise-level security both for agents integrated with our xUI hybrid agentic framework and for their interactions with third-party agents, all grounded in Zero Trust security principles. In the architecture we are building with Microsoft, Microsoft Entra ID and Microsoft Entra Agent ID will enforce identity for humans and agents; privileged actions will be governed through role‑based and privileged access controls; devices will be continuously managed and attested via Microsoft Intune; and data usage will be protected and audited through Microsoft Purview.

Together, these layers form a compliance‑aware trust fabric where every command is authenticated, every action is authorized, and every outcome is traceable. This is what makes agentic AI deployable at scale in environments where safety, security, regulation, and accountability are must-haves and where humans need to stay “in the loop.”
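The authenticate, authorize, and trace chain described above can be illustrated with a small, self-contained Python sketch. It does not call Microsoft Entra, Intune, or Purview; instead, HMAC shared keys stand in for an identity provider, a hard-coded role table stands in for role-based access control, and a hash-chained list stands in for an audit store. All principals, roles, and action names are hypothetical. The point is the ordering: a command that fails authentication never reaches authorization, privileged actions wait for a named human approver, and every outcome, including denials, lands in a log where tampering is detectable.

```python
import hashlib
import hmac
import json
from typing import Optional

# Hypothetical shared keys standing in for identities issued by an identity provider.
KEYS = {"op-1142": b"operator-demo-key", "amr-07": b"robot-demo-key"}

# Hypothetical role assignments and role-based policy.
ROLES = {"op-1142": "line_supervisor", "amr-07": "mobile_robot"}
POLICY = {
    "line_supervisor": {"reroute_line", "pause_line", "adjust_threshold"},
    "mobile_robot": {"reroute_line"},
}
PRIVILEGED = {"adjust_threshold"}  # also requires a named human approver

GENESIS = "0" * 64


def sign(principal: str, command: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the command."""
    payload = json.dumps(command, sort_keys=True).encode()
    return hmac.new(KEYS[principal], payload, hashlib.sha256).hexdigest()


class AuditLog:
    """Append-only log; each entry's hash covers the previous hash, so edits break the chain."""

    def __init__(self) -> None:
        self.entries: list = []

    def append(self, record: dict) -> None:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev + body).encode()).hexdigest()
        # Store a decoded copy so later changes to the caller's dict cannot rewrite history.
        self.entries.append({"record": json.loads(body), "prev": prev, "hash": digest})

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev + body).encode()).hexdigest()
            if entry["prev"] != prev or entry["hash"] != expected:
                return False
            prev = entry["hash"]
        return True


def handle(principal: str, command: dict, signature: str,
           log: AuditLog, approved_by: Optional[str] = None) -> str:
    # 1. Authenticate: unknown principals or bad signatures are rejected first.
    if principal not in KEYS or not hmac.compare_digest(sign(principal, command), signature):
        outcome = "denied: authentication"
    # 2. Authorize: the principal's role must permit this action.
    elif command.get("action") not in POLICY.get(ROLES.get(principal, ""), set()):
        outcome = "denied: not authorized"
    # 3. Privileged actions are held until a human approver is named.
    elif command["action"] in PRIVILEGED and approved_by is None:
        outcome = "held: awaiting human approval"
    else:
        outcome = "executed"  # a real system would dispatch the command here
    # 4. Trace: every outcome, including denials, is appended to the log.
    log.append({"principal": principal, "command": command,
                "approved_by": approved_by, "outcome": outcome})
    return outcome
```

A production design would also authenticate the approver and keep the log outside the process; this sketch omits both for brevity.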

In operational AI systems, trust is no longer a property of the model alone. It is a property of the architecture, the interface, and the security controls that bind them together.

Resilient Voice Infrastructure

As AI systems move off screens and into the physical world, traditional interfaces break down. Touchscreens, keyboards, and mobile apps are poorly suited to environments where hands are busy, eyes are occupied, ambient noise varies, and response times are critical.

This is why resilient voice – meaning a voice system that holds up in demanding, real-world operational environments – is emerging as a core interface for physical AI, robotics, and smart spaces.

Voice is uniquely positioned to connect human intent and machine execution when AI must behave deterministically. It allows operators to query, command, interrupt, and confirm actions in real time without breaking physical flow. But this works only if voice performs reliably under pressure: high noise, multiple speakers, accents, variable connectivity, and strict latency budgets.

That distinction between simple voice and resilient voice matters. In controlled demos, many voice systems perform well. On factory floors, in logistics hubs, or alongside autonomous machines, only battle-tested voice AI holds up.

I explored this idea in more depth in a prior post, “Voice Is the New Infrastructure,” where I argued that once AI becomes operational infrastructure, its interface must meet the same standard of robustness, safety, and compliance.

The Bigger Signal

Hannover Messe 2026 made it clear that agentic physical AI is real, it is scaling, and it is entering environments where mistakes carry real-world consequences. In that world, interaction is no longer a UX afterthought. It is a system-critical component.

Resilient voice sits at the intersection of autonomy and accountability. It keeps humans meaningfully in the loop while allowing AI agents to operate at machine speed. And as physical AI systems proliferate across factories, robots, and intelligent spaces, voice is increasingly becoming the interface that makes agentic AI usable, trustworthy, and deployable at scale.